Measuring Clinical Decision Support Influence on Evidence-Based Nursing Practice

Purpose/Objectives: To measure the effect of clinical decision support (CDS) on oncology nurse evidence-based practice (EBP).

Design: Longitudinal cluster-randomized design.

Setting: Four distinctly separate oncology clinics associated with an academic medical center.

Sample: The study sample comprised randomly selected data elements from the nursing documentation software. The data elements were patient-reported symptoms and the associated nurse interventions. The sample totaled 600 observations, derived from baseline, posteducation, and postintervention samples of 200 each (100 in the intervention group and 100 in the control group for each sample).

Methods: The cluster design was used to support randomization of the study intervention at the clinic level rather than the individual participant level to reduce possible diffusion of the study intervention. An elongated data collection cycle (11 weeks) controlled for temporary increases in nurse EBP related to the education or CDS intervention.

Main Research Variables: The dependent variable was the nurse evidence-based documentation rate, calculated from the nurse-documented interventions. The independent variable was the CDS added to the nursing documentation software.

Findings: The average EBP rate at baseline for the control and intervention groups was 27%. After education, the average EBP rate increased to 37%, and then decreased to 26% in the postintervention sample. Mixed-model linear statistical analysis revealed no significant interaction of group by sample. The CDS intervention did not result in an increase in nurse EBP.

Conclusions: EBP education increased nurse EBP documentation rates significantly but only temporarily. Nurses may have used evidence in practice but may not have documented their interventions.

Implications for Nursing: More research is needed to understand the complex relationship between CDS, nursing practice, and nursing EBP intervention documentation. CDS may have a different effect on nurse EBP, physician EBP, and other medical professional EBP.

To achieve the best patient outcomes, meet patient expectations, and satisfy government mandates for improving patient outcomes and increasing the quality of health care, integrating the highest level of evidence into practice is essential (Berner, 2009; Health and Medicine Division of the National Academies of Sciences, Engineering, and Medicine [HMD], 2012; Mitchell, Beck, Hood, Moore, & Tanner, 2007). Clinical decision support (CDS) is an intervention specifically designed to increase evidence-based practice (EBP) integration by displaying pertinent evidence when healthcare professionals and patients make healthcare decisions (Brokel, 2009). Healthcare organizations can implement CDS in various ways, depending on technology capabilities. CDS can be provided via email or paper reminders and also can be placed in computerized physician order entry or documentation software. Research demonstrates that CDS is most effective when embedded in software and displayed during decision making (Brokel, 2009). Specifically, CDS should provide the right information, for the right person, in the right format, at the right time, in the right electronic medium (Berner, 2009; Brokel, 2009; Osheroff, 2010). According to Anitha and Rajagopalan (2011), CDS improves the quality of health care. The Health Information Technology for Economic and Clinical Health (HITECH) Act and the HMD (2012) report recommend implementing CDS and other technologies to assist clinicians in delivering high-quality health care and minimizing medical errors.

Notwithstanding the mandates and expectations for EBP and the availability of evidence-based interventions for symptom management, research suggests that oncology nurse EBP rates are less than 50% (Saca-Hazboun, 2009). Abundant literature during the past 20 years describes barriers limiting nursing EBP and variables influencing EBP (Burns & Grove, 2011; Carlson & Plonczynski, 2008; Marchionni & Ritchie, 2008). HITECH certification requires some use of CDS, but research is inconsistent on the effects of CDS on nursing practice (Ash et al., 2012). Bryan and Boren (2008) postulated that the inconsistency in findings was related to poor technology implementation.

As CDSS will likely continue to be at the forefront of the march toward effective standards-based care, more work needs to be done to determine effective implementation strategies for the use of CDSS across multiple settings and patient populations. (p. 79)

A literature review on CDS was conducted to provide a foundation for determining the method and design for the current study. Berner (2009) recommended implementing CDS at the point of practitioner and patient decision making. Others have recommended that CDS contain only the most critical information for the decision-making process (Bauldoff, Kirkpatrick, Sheets, Mays, & Curran, 2008; Baysari, Westbrook, Braithwaite, & Day, 2011; Bryan & Boren, 2008). Davis and Pavur (2011) conducted a systematic review and found that CDS enhanced EBP rates in a clinic setting. Anitha and Rajagopalan (2011) implemented CDS in the electronic health record at the point of decision making and found increased EBP and increased quality of patient care in an outpatient medical clinic. Bryan and Boren (2008) conducted a systematic review of CDS and found that, of the 17 studies included in the review, 9 showed that CDS increased EBP, 4 had variable results, and 4 showed that CDS did not improve EBP. Anchala et al. (2012) conducted a meta-analysis of cluster CDS and found a nonsignificant effect of CDS. Gurwitz et al. (2008) conducted a cluster randomized, controlled trial and found no significant difference between the control group and the intervention group using CDS. Hemens et al. (2011) conducted a systematic review and found that CDS improved EBP in 37 of 59 studies. Davis and Pavur (2011) and Dulko, Hertz, Julien, Beck, and Mooney (2010) recommended coupling audit and feedback interventions with judicious CDS implementation to support increased EBP.

No published research has examined the influence of CDS on oncology nursing EBP. The purpose of this research was to test for an increase in evidence-based nursing intervention documentation after implementation of CDS in the nursing electronic documentation software. Specifically, this research measured the difference in nursing EBP rates with and without CDS.

Methods

Using the evidence from the literature reviewed, the design of the current study included CDS in the form of drop-down boxes to offer nurses the most critical evidence-based information at the time of patient–clinician decision making. To isolate and test the effect of the CDS, the design of the current study excluded other influencing variables such as audit and feedback. This cluster randomized trial tested if the low oncology nurse EBP rate, as documented by Saca-Hazboun (2009), was influenced by lack of access to evidence-based interventions during nurse–patient interactions.

This cluster randomized trial design was used to determine the effect of CDS on nurse EBP. The study intervention (CDS) was randomly assigned by clinic rather than by individual nurse to reduce diffusion of the study intervention. Two oncology clinics were clustered in the control group, and two clinics were in the intervention group. The outcome was individual nurses’ documentation of evidence-based interventions. The hypothesis was that oncology nurses would show an increase in the use of EBP after using CDS as compared to a control group without CDS. The study intervention appeared when a nurse in the intervention group documented a symptom, prompting the nurse to have a conversation with the patient about the evidence-based interventions for that symptom. The nurse EBP rate was measured in a randomly selected sample of records within the three data collection periods. The current study contained five phases: a baseline sample, education, a posteducation sample, study intervention implementation, and a postintervention sample. This study was approved by the institutional review board of the Vanderbilt University Medical Center in Nashville, Tennessee.

Setting

The research was conducted in four adult oncology clinics associated with the Vanderbilt University Medical Center. The study sample included a small percentage of the available patient records during the date range for each sample period. All four clinics were outpatient cancer infusion clinics in four separate locations as many as 20 miles apart. The control group had 24 nurses and the intervention group had 26 for a total of 50 nurses. All nurses in the control and intervention groups used the same electronic documentation software. The CDS drop-down boxes were configured to display EBP information for intervention clinic nurses and not for control clinic nurses.

Sample

A total of 600 nurse interventions associated with one of the four patient-reported symptoms (constipation, diarrhea, fatigue, and pain) were extracted from the electronic data warehouse (EDW). The inclusion criteria were: (a) the first reported symptom during the sample period for constipation, diarrhea, fatigue, and/or pain; (b) the encounter occurred during one of the two-week sample periods (baseline, posteducation, and postintervention); and (c) the encounter was associated with one of the approved International Classification of Diseases–9 cancer diagnoses. Two hundred interventions were randomly selected from all of the encounters meeting the inclusion criteria within each of the study periods (100 from the control clinics and 100 from the intervention clinics). The diverse nature of patients, nurses, and organizational culture in the different clinics grouped together in two clusters supports generalization of the study results.
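The sampling step described above can be sketched in a few lines. This is a minimal illustration only, not the authors' extraction procedure: the `draw_sample` helper, the record fields, and the fixed seed are assumptions, and the encounter list is hypothetical data standing in for EDW records that already meet the inclusion criteria.

```python
import random


def draw_sample(encounters, per_group=100, seed=0):
    """Randomly select `per_group` eligible encounters from each study group."""
    rng = random.Random(seed)  # fixed seed (assumption) so the draw is repeatable
    sample = []
    for group in ("control", "intervention"):
        eligible = [e for e in encounters if e["group"] == group]
        # random.sample draws without replacement and raises ValueError
        # if fewer than `per_group` eligible encounters exist.
        sample.extend(rng.sample(eligible, per_group))
    return sample


# Hypothetical eligible encounters for one two-week sample period.
encounters = [
    {"id": i, "group": "control" if i % 2 == 0 else "intervention"}
    for i in range(1000)
]
period_sample = draw_sample(encounters)
```

Repeating the draw for the baseline, posteducation, and postintervention periods would give the three samples of 200 observations each.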

Data Collection

All sample data were randomly selected from the EDW using the inclusion and exclusion criteria. Each sample was drawn from a two-week sampling period. The nursing EBP rate was manually coded by a single investigator, and each data point was reviewed on two separate occasions to verify accuracy.

Intervention

The study intervention was CDS in the form of drop-down boxes created from the Oncology Nursing Society (ONS) Putting Evidence Into Practice (PEP) guidelines for four cancer-related symptoms.

During the past decade, ONS has developed PEP evidence-based guidelines to manage common symptoms experienced by patients during and after cancer treatment. The PEP guidelines have become standard oncology nursing practice in the United States (Gobel, Beck, & O’Leary, 2006). The PEP guidelines are organized by symptom and contain interventions that are divided into three categories and six levels of evidence. The three categories are arranged similar to the colors in a traffic light. Interventions based on evidence in green are the highest level of evidence. Interventions based on evidence in yellow signify caution and the mid-evidence level. Interventions based on evidence in red signify that the evidence level is low or not recommended for practice.

The two levels of evidence in the green category are “recommended for practice” and “likely to be effective.” The two levels of evidence in the yellow category are “benefits balanced with harm” and “effectiveness not established.” The two levels of evidence in the red category are “effectiveness unlikely” and “not recommended for practice.” The study intervention (CDS in the form of evidence-based drop-down boxes) displayed interventions that were taken from the PEP interventions in the green and yellow categories.
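The category structure above maps naturally onto a small lookup table. The sketch below is illustrative and does not reproduce the study software: the names `PEP_LEVELS`, `category_of`, and `shown_in_cds` are hypothetical, and only the category and level labels come from the text.

```python
# PEP traffic-light categories and their two levels of evidence each.
PEP_LEVELS = {
    "green": ["recommended for practice", "likely to be effective"],
    "yellow": ["benefits balanced with harm", "effectiveness not established"],
    "red": ["effectiveness unlikely", "not recommended for practice"],
}

# The study CDS displayed interventions from the green and yellow categories only.
DISPLAYED_CATEGORIES = ("green", "yellow")


def category_of(level):
    """Return the traffic-light category that contains an evidence level."""
    for category, levels in PEP_LEVELS.items():
        if level in levels:
            return category
    raise ValueError(f"unknown evidence level: {level!r}")


def shown_in_cds(level):
    """True when interventions at this evidence level would appear in the drop-down."""
    return category_of(level) in DISPLAYED_CATEGORIES
```

For example, `shown_in_cds("likely to be effective")` is true, whereas `shown_in_cds("effectiveness unlikely")` is false.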

When the nurse documented one of the four symptoms, the CDS with recommended interventions appeared adjacent to the symptom. The CDS provided the intervention group nurses with a one-click, evidence-based documentation option. The CDS was configured in the documentation system to appear exactly the same way every time the nurse charted one of the four symptoms (constipation, diarrhea, fatigue, and pain) so the fidelity of the CDS was maintained.

Procedure

After the baseline sample was collected, education on the PEP interventions for constipation, diarrhea, fatigue, and pain was conducted with the nurses in both groups. This comprised a brief (60-minute) education session delivered live during staff meetings. This education was provided to ensure that all nurses working in both groups were familiar with the PEP guidelines for the four selected symptoms. All nurses received the same education. In addition, the intervention group nurses received 10 minutes of technical instruction on the CDS drop-down boxes.

After completing the education, the second sample (posteducation) was collected for a two-week date range using the same criteria as were used for the baseline sample. Subsequently, the CDS intervention was implemented in the intervention clinics. During the seven-week CDS implementation period, nurses in the control group documented interventions using free text in an open text field. The final data sample was collected following the seven-week CDS implementation period, again using the same criteria as were used for the previous two samples.

Data Analysis

Frequency distributions were conducted in SPSS®, version 21.0, to summarize nurse demographic characteristics and generate nurse EBP rates for each of the three samples: baseline (200 observations), posteducation (200 observations), and postintervention (200 observations). Chi-square tests of independence were used to test for differences in demographic characteristics between the two study groups. Individual nurses were not identified in the current study. Mixed-level general linear modeling analysis was used because nurses practicing within clinics were assumed to practice similarly and the standard errors used for testing study hypotheses needed to be adjusted for this lack of independence. According to Abad, Litière, and Molenberghs (2010), statistical analysis such as the mixed model analysis required a sample size of at least 350. A sample smaller than 350 placed the current study at risk for a type II error; however, a very large sample would place it at risk for a type I error. The sample size recommendations for mixed model analysis included 60 observations per group because one variable was a predictor (independent) and one variable was dependent, and the nested nurse group correlations were assumed to influence statistical power (Abad et al., 2010; Feng, McLerran, & Grizzle, 1996).

The critical statistical test of CDS effect on nursing EBP within this analysis was the interaction of group (control or intervention) with time of data sampling (baseline, posteducation, and postintervention). A probability of 0.05 (p < 0.05) was used for determining statistical significance. A positive episode of EBP occurred when a nurse documented a PEP-recommended intervention for a related symptom.
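The outcome coding and the group-by-time comparison can be illustrated descriptively. The sketch below is not the mixed-model analysis run in SPSS and produces no F test: it only codes positive EBP episodes, computes the EBP rate in each group-by-sample cell, and forms a simple difference-in-differences contrast that conveys what the interaction term asks. All intervention names, the `PEP_RECOMMENDED` lookup, and the observations are hypothetical placeholders.

```python
from collections import defaultdict

# Hypothetical lookup of PEP-recommended interventions per symptom (placeholders).
PEP_RECOMMENDED = {
    "fatigue": {"intervention_a"},
    "pain": {"intervention_b"},
}


def is_positive_episode(symptom, documented):
    """True when any documented intervention is PEP-recommended for the symptom."""
    return bool(PEP_RECOMMENDED.get(symptom, set()) & set(documented))


def cell_rates(observations):
    """EBP rate for each (group, sample) cell."""
    counts = defaultdict(lambda: [0, 0])  # (group, sample) -> [positives, total]
    for obs in observations:
        cell = counts[(obs["group"], obs["sample"])]
        cell[0] += is_positive_episode(obs["symptom"], obs["documented"])
        cell[1] += 1
    return {key: pos / total for key, (pos, total) in counts.items()}


def interaction_contrast(rates, first="baseline", last="postintervention"):
    """Change in the intervention group minus change in the control group."""
    change = lambda g: rates[(g, last)] - rates[(g, first)]
    return change("intervention") - change("control")


def make(group, sample, n_pos, n_total):
    """Build hypothetical fatigue observations with n_pos positive episodes."""
    return [
        {"group": group, "sample": sample, "symptom": "fatigue",
         "documented": ["intervention_a"] if i < n_pos else ["other_free_text"]}
        for i in range(n_total)
    ]


observations = (
    make("control", "baseline", 1, 4)
    + make("control", "postintervention", 1, 4)
    + make("intervention", "baseline", 2, 4)
    + make("intervention", "postintervention", 3, 4)
)
rates = cell_rates(observations)
contrast = interaction_contrast(rates)
```

A contrast near zero corresponds to the two groups changing in parallel over time, which is the pattern a nonsignificant interaction would reflect.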

Findings

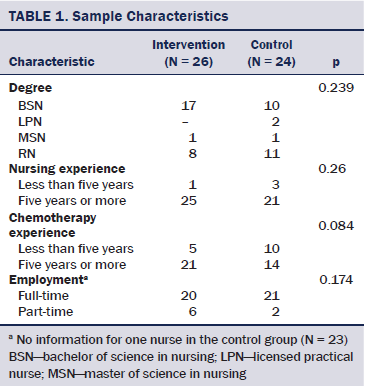

Sample Characteristics

All nurses in the current study were chemotherapy-certified. Other characteristics of the study sample are summarized in Table 1. No statistically significant demographic differences between the nurses in the intervention and control groups were observed (p > 0.05).

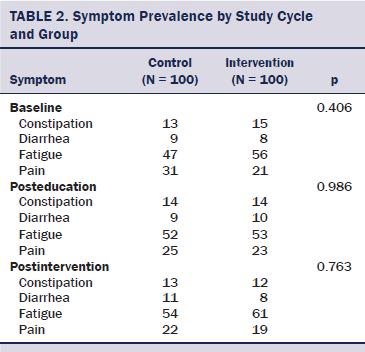

Symptom Prevalence

The distributions of the included symptoms at baseline, posteducation, and postintervention are summarized in Table 2. The most commonly observed symptom in each study period was fatigue (56%–61%), followed by pain (19%–21%). No statistically significant differences were seen in the symptom distributions between the intervention and control clinics (p > 0.05).

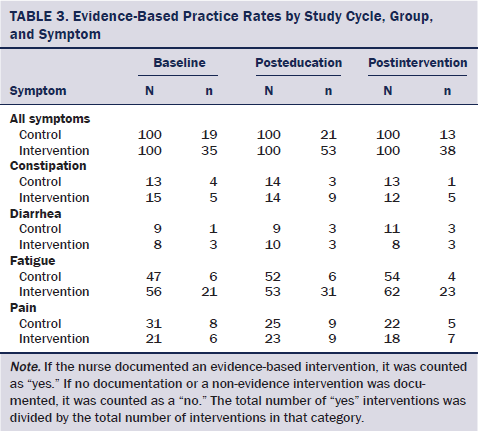

Use of evidence-based practice: The EBP rates within each study group at each time of assessment are summarized in Table 3. The intervention group’s EBP rate was greater than the control group’s throughout the study, resulting in a statistically significant main effect of study group (F[1, 515] = 55.14, p < 0.001). In addition, a statistically significant main effect of the sample was seen in that both groups increased EBP rates after education and then decreased back to or below baseline rates in the last sample (F[2, 594] = 3.49, p = 0.031). However, no statistically significant interaction between study group and time of assessment was seen (F[2, 593] = 1.37, p = 0.255). Therefore, education demonstrated a short-lived increase in EBP, whereas CDS showed no positive effect on the EBP rate.

Post-hoc analyses per symptom: The prevalence of constipation and diarrhea symptoms was low. No statistically significant effects for the symptoms of constipation, diarrhea, and pain were observed, other than a main effect of group for constipation (F[1, 14] = 7.62, p = 0.016). Beginning prior to study initiation and throughout the study, the intervention group had higher EBP rates for constipation than those seen in the control group (overall rates: control = 8 of 40; intervention = 19 of 41). The same pattern as that observed for all symptoms was observed for fatigue. In addition to the same statistically significant main effect of study group observed prior to the interventions and throughout the study (overall rates: control = 16 of 153, 10%; intervention = 75 of 171, 44%; F[1, 313] = 78.27, p < 0.001), a main effect of time of assessment similar to that in the analysis of all of the symptoms was observed (F[2, 317] = 3.78, p = 0.024). Fatigue EBP rates for both groups combined were 26% at baseline (27 of 103), increased to 35% (37 of 105) after the education sessions, and dropped back to baseline levels after the CDS (27 of 116, 23%).
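The fatigue percentages reported above follow directly from the stated counts, as the short check below illustrates. The counts are taken from the text; the variable names and the `pct` helper are illustrative only.

```python
# (positive EBP episodes, observations) for fatigue, as reported in the text.
by_sample = {
    "baseline": (27, 103),
    "posteducation": (37, 105),
    "postintervention": (27, 116),
}
by_group = {
    "control": (16, 153),
    "intervention": (75, 171),
}


def pct(positives, total):
    """EBP rate as a whole-number percentage."""
    return round(100 * positives / total)


sample_pct = {name: pct(*counts) for name, counts in by_sample.items()}
group_pct = {name: pct(*counts) for name, counts in by_group.items()}

# Both breakdowns should describe the same set of fatigue observations.
total_by_sample = sum(total for _, total in by_sample.values())
total_by_group = sum(total for _, total in by_group.values())
positives_by_sample = sum(pos for pos, _ in by_sample.values())
positives_by_group = sum(pos for pos, _ in by_group.values())
```

Both breakdowns sum to 324 fatigue observations with 91 positive EBP episodes, so the per-sample and per-group figures are internally consistent.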

Discussion

The statistically significant change in EBP rate occurred in both groups after education, indicating that the education sessions had a short-lived positive effect on EBP rates. Contrary to the authors’ hypothesis, the EBP rate declined after CDS was implemented in the intervention and control groups. The results showed that CDS did not improve the nursing EBP rate.

Although the research design contained many recommendations from other scientists, the design also controlled for other known support strategies to test the effect of CDS alone on EBP. The study design controlled for influencing variables such as audit and feedback, managerial support, and continued nurse education by eliminating those variables from the study design and study environment. The expected small effect of CDS on nurses’ EBP may have been further reduced by the study design, which intentionally excluded supportive strategies to measure the effect of CDS alone on nurse EBP. The study might have shown a small effect of CDS on EBP if the additional strategies known to affect clinician behavior had been included.

The results of the current study demonstrated that CDS implementation aimed at increasing EBP needs to be combined with support strategies such as audit and feedback (Dulko et al., 2010). Dulko et al. (2010) found that auditing documentation for compliance with evidence-based interventions and offering individual feedback on the audits improved compliance with EBP guidelines by as much as 43%. Additional research should include audit and feedback supportive strategies to improve compliance with evidence-based guidelines.

EBP is a complex process, and no easy formula for success exists. Human factors may play as much of a role in CDS success as the type and design of CDS and organizational factors (Jenkins & Calzone, 2007; Rogers, 2004; Timmins, 2008). Grimshaw et al. (2006) found that supportive strategies, such as reminders, audit and feedback, and educational interventions, increased EBP by only 10%. Mollon et al. (2009) conducted a systematic review and found that CDS increased EBP but noted that few high-quality studies show improvement in patient outcomes.

The results from this research were similar to previous research in which CDS had no effect or a negative effect on EBP (Anchala et al., 2012; Gurwitz et al., 2008; Hemens et al., 2011). Some studies showed that CDS increased EBP by a small effect size (Chaudhry et al., 2006; Pearson et al., 2009; Randell, Mitchell, Dowding, Cullum, & Thompson, 2007), and some studies reported moderate effect sizes (Cheung et al., 2012; Damiani et al., 2010). Of note, many of the study designs discussed in this literature review included additional strategies that supported or improved CDS adoption, such as audit and feedback loops, reminders, and managerial or mentor support.

The unique element in the current study is that it focused on CDS in nursing practice, whereas the bulk of CDS research focuses on physician practice. In addition, research focusing on physician practice CDS used interventions that were considered to be an essential element of the physician plan of care; the CDS documentation in the current research was not essential to third-party payment or plan of care. Lastly, members of the healthcare team use the physician plan of care and associated interventions as a guide for patient care and communication, but the same team members may not use the nurse documentation in the same way.

Whereas the physician CDS and associated intervention documentation are integral to reimbursement and team communication, the same is not true for oncology nursing documentation. Although the nurses may have integrated evidence-based interventions in practice, perhaps some nurses chose not to document interventions because they perceived that the CDS documentation did not add value to healthcare team communications.

Rogers (2004) asserted that the process for organizational and individual change focused on communication with the people who needed to change in each critical phase of the change process (Jenkins & Calzone, 2007; Timmins, 2008). Because coaching and other focused communication are known to increase EBP (Dulko et al., 2010; Tolson, Booth, & Lowndes, 2008), those strategies were excluded from the study design to measure the effect of CDS alone on EBP.

Rogers (2004) postulated that people use five personal factors to decide for or against personal behavior change: relative advantage, compatibility, complexity or simplicity, trialability, and observability. Although the CDS in the current study met the criteria for driving change in the first four factors, the fifth factor may have been a problem. The CDS had a clear advantage because it offered one-click documentation (compatible and simple). Documenting interventions using CDS took less time than the control group method of documenting by manual typing (trialability). The last factor, observability, was the problem: some nurses questioned the value of the documentation. Some nurses stated that they believed no one would read nursing intervention documentation.

The lack of observability may have affected the implementation phase in which the nurse actually used the intervention in practice but did not document the intervention because of no perceived benefit (Rogers, 2004). This coincides with Lewin’s theory that the drivers for change must outweigh the drivers resisting change (Doolin, Quinn, Bryant, Lyons, & Kleinpell, 2011).

Lewin’s theory also required adding drivers that would force a change in nursing behavior and remove the ability to continue old behaviors (Doolin et al., 2011). An example of adding a driver to change behavior in the research design would have been requiring the nurses to document the evidence-based intervention. The organizational policies and software structure were not flexible enough to accommodate these two principles in Lewin’s theory. Some nurses may not have been motivated to change without the organizational drivers supporting change.

HMD (2012) warned scientists that technology solutions are a small piece of the efforts to increase EBP and that other interventions are necessary to sustain changes in behavior. In reviewing the literature, several studies offer an explanation for the negative results in the current research. Three key components of the literature review were removal of technology associated with old non-evidence-based behaviors, required behavior change to support EBP, and rapid audit and feedback loops (Ash et al., 2012; Doolin et al., 2011; Dulko et al., 2010; Tolson et al., 2008). The current study excluded those strategies in an effort to control for influencing variables. Additional research including the supporting strategies, such as audit and feedback, likely would produce different results.

Implications for Nursing Practice and Research

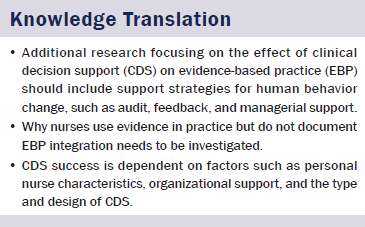

Additional research focusing on the effect of CDS on EBP should include support strategies for human behavior change. According to Gorelick (2010), providing CDS at the point of decision making is indispensable to achieve higher rates of EBP. The results of the current research show that implementing CDS alone does not increase oncology nursing EBP. Additional research should include all of the recommendations for CDS success (Ash et al., 2012; Berner, 2009; Osheroff, 2010), as well as the variables supporting practice change and EBP (Bryan & Boren, 2008; Davis & Pavur, 2011; Jenkins & Calzone, 2007; Rogers, 2004; Timmins, 2008). Additional designs should contain electronic random sampling methods, which offer a higher level of research rigor and render the results less biased than self-reported surveys.

Additional research also should include the precepts of Lewin’s theory requiring the old behaviors to be removed from the workflow and new behaviors to be reinforced. Reinforcing and repeating the new EBP behaviors converts the change into habit in daily workflow (Schriner et al., 2010). One way to reinforce the use of evidence-based interventions is to provide audit and feedback for individual nurses (Dulko et al., 2010). Design of additional research should include implementation of drivers for nurse behavior change, such as policies requiring nurse EBP documentation, incentives for EBP, and audit and feedback for individual accountability (Anchala et al., 2012; Davis & Pavur, 2011; Doolin et al., 2011; Dulko et al., 2010; Hemens et al., 2011).

Conclusion

The results demonstrated that CDS implementation aimed at increasing EBP is a complex process and supportive strategies with CDS are needed to change nurse behavior. Personal nurse characteristics, organizational support, drivers supporting change, external reporting requirements, the type and design of CDS, communication about the purpose of CDS, and connection to patient outcomes influence the success of CDS and higher nursing EBP rates.

References

Abad, A.A., Litière, S., & Molenberghs, G. (2010). Testing for misspecification in generalized linear mixed models. Biostatistics, 11, 771–786.

Anchala, R., Pinto, M.P., Shroufi, A., Chowdhury, R., Sanderson, J., Johnson, L., . . . Franco, O.H. (2012). The role of Decision Support System (DSS) in prevention of cardiovascular disease: A systematic review and meta-analysis. PLOS One, 7, e47064. doi:10.1371/journal.pone.0047064

Anitha, B.B., & Rajagopalan, S.P. (2011). Computer decision support systems for evidence-based medicine: An overview. European Journal of Scientific Research, 50, 439–447.

Ash, J.S., Sittig, D.F., Guappone, K.P., Dykstra, R.H., Richardson, J., Wright, A., . . . Middleton, B. (2012). Recommended practices for computerized clinical decision support and knowledge management in community settings: A qualitative study. BMC Medical Informatics and Decision Making, 12(6), 1–19. doi:10.1186/1472-6947-12-6

Bauldoff, G.S., Kirkpatrick, B., Sheets, D.J., Mays, B., & Curran, C.R. (2008). Implementation of handheld devices. Nurse Educator, 33, 244–248. doi:10.1097/01.NNE.0000334788.22675.fd

Baysari, M., Westbrook, J., Braithwaite, J., & Day, R.O. (2011). The role of computerized decision support in reducing errors in selecting medicines for prescription: Narrative review. Drug Safety, 34, 289–298. doi:10.2165/11588200-000000000-00000

Berner, E.S. (2009). Clinical decision support systems: State of the art. Retrieved from https://healthit.ahrq.gov/sites/default/files/docs/page/09-0069-EF_1.pdf

Brokel, J.M. (2009). Infusing clinical decision support interventions into electronic health records. Urologic Nursing, 29, 345–352.

Bryan, C., & Boren, S.A. (2008). The use and effectiveness of electronic clinical decision support tools in the ambulatory/primary care setting: A systematic review of the literature. Informatics in Primary Care, 16, 79–91.

Burns, N., & Grove, S.K. (2011). Understanding nursing research: Building an evidence-based practice (5th ed.). Maryland Heights, MO: Elsevier Saunders.

Carlson, C.L., & Plonczynski, D.J. (2008). Has the BARRIERS Scale changed nursing practice? An integrative review. Journal of Advanced Nursing, 63, 322–333. doi:10.1111/j.1365-2648.2008.04705.x

Chaudhry, B., Wang, J., Wu, S., Maglione, M., Mojica, W., Roth, E., . . . Shekelle, P.G. (2006). Systematic review: Impact of health information technology on quality, efficiency, and costs of medical care. Annals of Internal Medicine, 144, 742–752. doi:10.7326/0003-4819-144-10-200605160-00125

Cheung, A., Wier, M., Mayhew, A., Kozloff, M., Brown, K., & Grimshaw, J. (2012). Overview of systematic reviews of the effectiveness of reminders in improving healthcare professional behavior. Systematic Reviews, 1(36), 2–8. doi:10.1186/2046-4053-1-36

Damiani, G., Pinnarelli, L., Colosimo, S.C., Almiento, R., Sicuro, L., Glasso, R., . . . Ricciardi, W. (2010). The effectiveness of computerized clinical guidelines in the process of care: A systematic review. BMC Health Services Research. Retrieved from http://bmchealthservres.biomedcentral.com/articles/10.1186/1472-6963-10…

Davis, M.A., & Pavur, R.J. (2011). The relationship between office system tools and evidence-based care in primary care physician practice. Health Services Management Research, 24, 107–113. doi:10.1258/hsmr.2010.010019

Doolin, C.T., Quinn, L.D., Bryant, L.G., Lyons, A.A., & Kleinpell, R.M. (2011). Family presence during cardiopulmonary resuscitation: Using evidence-based knowledge to guide the advanced practice nurse in developing formal policy and practice guidelines. Journal of the American Association of Nurse Practitioners, 23, 8–14. doi:10.1111/j.1745-7599.2010.00569.x

Dulko, D., Hertz, E., Julien, J., Beck, S., & Mooney, K. (2010). Implementation of cancer pain guidelines by acute care nurse practitioners using an audit and feedback strategy. Journal of the American Association of Nurse Practitioners, 22, 45–55. doi:10.1111/j.1745-7599.2009.00469.x

Feng, Z., McLerran, D., & Grizzle, J. (1996). A comparison of statistical methods for clustered data analysis with Gaussian error. Statistics in Medicine, 15, 1793–1806. doi:10.1002/(SICI)1097-0258(19960830)15:16<1793::AID-SIM332>3.0.CO;2-2

Gobel, B.H., Beck, S.L., & O’Leary, C. (2006). Nursing-sensitive patient outcomes: The development of the Putting Evidence Into Practice resources for nursing practice. Clinical Journal of Oncology Nursing, 10, 621–624. doi:10.1188/06.CJON.621-624

Gorelick, C.S. (2010). Personal digital assistants: Their influence on clinical decision-making and the influence of evidence-based practice in baccalaureate nursing students. Retrieved from http://pqdtopen.proquest.com/doc/250777184.html?FMT=ABS

Grimshaw, J., Eccles, M., Thomas, R., MacLennan, G., Ramsay, C., Fraser, C., & Vale, L. (2006). Toward evidence-based quality improvement: Evidence (and its limitations) of the effectiveness of guideline dissemination and implementation strategies 1966–1998. Journal of General Internal Medicine, 21(Suppl. 2), S14–S20. doi:10.1111/j.1525-1497.2006.00357.x

Gurwitz, J.H., Field, T.S., Rochon, P., Judge, J., Harrold, L.R., Lee, M., . . . Bates, D.W. (2008). Effect of computerized provider order entry with clinical decision support on adverse drug events in the long-term care setting. Journal of the American Geriatrics Society, 56, 2225–2233. doi:10.1111/j.1532-5415.2008.02004.x

Health and Medicine Division of the National Academies of Sciences, Engineering, and Medicine. (2012). Committee on Patient Safety and Health Information Technology. Retrieved from http://www.ncbi.nlm.nih.gov/books/NBK189666

Hemens, B.J., Holbrook, A., Tonkin, M., Mackay, J.A., Weise-Kelly, L., Navarro, T., . . . Haynes, R.B. (2011). Computerized clinical decision support systems for drug prescribing and management: A decision-maker-researcher partnership systematic review. Implementation Science, 6, 89–105. doi:10.1186/1748-5908-6-89

Jenkins, J., & Calzone, K.A. (2007). Establishing the essential nursing competencies for genetics and genomics. Journal of Nursing Scholarship, 39, 10–16. doi:10.1111/j.1547-5069.2007.00137.x

Marchionni, C., & Ritchie, J. (2008). Organizational factors that support the implementation of a nursing best practice guideline. Journal of Nursing Management, 16, 266–274. doi:10.1111/j.1365-2834.2007.00775.x

Mitchell, S.A., Beck, S.L., Hood, L.E., Moore, K., & Tanner, E.R. (2007). Putting Evidence Into Practice: Evidence-based interventions for fatigue during and following cancer and its treatment. Clinical Journal of Oncology Nursing, 11, 99–113. doi:10.1188/07.CJON.99-113

Mollon, B., Chong, J.R., Holbrook, A.M., Sung, M., Thabane, L., & Foster, G. (2009). Features predicting the success of clinical decision support for prescribing: A systematic review of randomized controlled trials. BMC Medical Informatics and Decision Making. Retrieved from http://bmcmedinformdecismak.biomedcentral.com/articles/10.1186/1472-694…

Osheroff, J.A. (2010). Structuring care recommendations for clinical decision support. Retrieved from https://healthit.ahrq.gov/sites/default/files/docs/activity/structuring…

Pearson, S.A., Moxey, A., Robertson, J., Hains, I., Williamson, M., Reeve, J., & Newby, D. (2009). Do computerized clinical decision support systems for prescribing change practice? A systematic review of the literature (1990–2007). BMC Health Services Research, 9, 154. doi:10.1186/1472-6963-9-154

Randell, R., Mitchell, N., Dowding, D., Cullum, N., & Thompson, C. (2007). Effects of computerized decision support systems on nursing performance and patient outcomes: A systematic review. Journal of Health Services Research and Policy, 12, 242–249.

Rogers, E.M. (2004). Diffusion of innovations (5th ed.). New York, NY: Free Press.

Saca-Hazboun, H. (2009). Putting Evidence Into Practice. Outcomes of using ONS PEP resources. ONS Connect, 24, 8–11.

Schriner, C., Deckelman, S., Kubat, M., Lenkay, J., Nims, L., & Sullivan, D. (2010). Collaboration of nursing faculty and college administration in creating organizational change. Nursing Education Perspectives, 31, 381–386. doi:10.1043/1536-5026-31.6.381

Timmins, F. (2008). Communication skills: Challenges encountered. Nurse Prescribing, 6, 11–14. doi:10.12968/npre.2008.6.1.28112

Tolson, D., Booth, J., & Lowndes, A. (2008). Achieving evidence-based nursing practice: Impact of the Caledonian Development Model. Journal of Nursing Management, 16, 682–691. doi:10.1111/j.1365-2834.2008.00889.x

About the Author(s)

Cortez is an information services consultant for Health Information Systems and Technologies at the Vanderbilt University Medical Center, Dietrich is a professor in the School of Nursing and School of Medicine at Vanderbilt University and Vanderbilt Ingram Cancer Center, and Wells is the director of Nursing Research at the Vanderbilt University Medical Center, all in Nashville, TN. No financial relationships to disclose. All authors contributed to the conceptualization and design, data collection, statistical support, analysis, and manuscript preparation. Cortez can be reached at susan.cortez@vanderbilt.edu, with copy to editor at ONFEditor@ons.org. Submitted June 2015. Accepted for publication October 20, 2015.